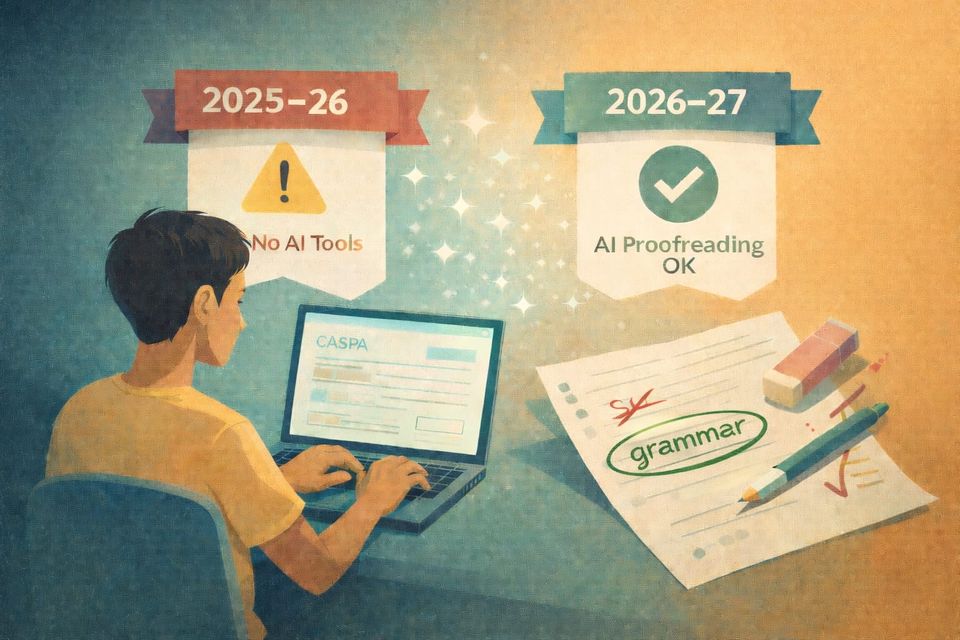

CASPA and Generative AI: What Programs Should Enforce in 2025–26, What Changes in 2026–27, and Why AI Detection Alone Isn’t Enough

CASPA’s AI rules are changing fast. Here’s what programs should enforce in 2025–26, what shifts in 2026–27, and why AI detection flags alone shouldn’t drive decisions.

Executive summary (for admissions teams)

- 2025–26 applicant agreement: applicants attest that their written passages were not written or modified by any other person or by generative AI, and they are strictly prohibited from using generative AI to create, write, or modify CASPA content.

- 2026–27 policy direction: applicants may consult "personal and professional resources," including AI tools, for non-substantive changes (spelling and grammar), provided the final submission reflects their own writing, work, and experiences. Applicants must also follow institutional policies.

- Enforcement posture: PAEA urges caution with AI detection tools because of their high false-positive rates, and it will not initiate a CASPA investigation when the sole basis is an AI detection flag.

1) The 2025–26 standard: broad prohibition, broad attestation

In the 2025–26 CASPA applicant agreement:

- applicants certify that written passages are their own work and not written/modified by another person or generative AI

- applicants are “strictly prohibited” from using generative AI to create/write/modify content submitted in CASPA

- PAEA and PA programs reserve the right to use systems that detect AI-created/modified content

Operational challenge: the "modified by any other person" phrasing can be read expansively, potentially capturing conventional advising and editing. This is likely part of why the 2026–27 language shifts toward "personal and professional resources."

2) The 2026–27 shift: codifying “non-substantive” AI assistance

The 2026–27 Policies & Procedures include “Guidance on Generative AI in CASPA User Submissions,” noting AI’s ubiquity and potential benefits (brainstorming, proofreading) along with risks (accuracy, confidentiality).

The new certification language explicitly allows:

- consulting personal/professional resources, including AI, for non-substantive changes like spelling and grammar

- as long as the final submission accurately reflects the applicant’s own writing/work/experiences

- and the applicant follows institutional policies

Implication for programs: program policy becomes even more important, because CASPA sets a baseline rather than standardizing institutional expectations.

3) AI detection tools: why “flagged by detector” should not drive adjudication

PAEA’s 2026–27 policy includes unusually direct language:

- current research indicates AI detection tools have high false-positive rates

- relying on detection as the sole basis can lead to unfair judgments

- PAEA will not initiate a CASPA investigation when the sole basis is a detection flag

Recommended stance

- Treat AI detectors as, at most, one weak signal (and often noise).

- Build a process centered on consistency, documentation, and applicant-centered due process.

- Focus on the substance of personal statements. Unless the experiences described are fabricated, that content remains valid and important information, however AI-polished the prose appears.

4) What programs can do instead: practical integrity measures

Here are policy-aligned options that reduce the risk of acting on false positives and preserve fairness:

A) Publish a clear institutional AI policy

Because the 2026–27 certification explicitly points applicants to institutional policies, a clear program policy helps both applicants and reviewers.

Suggested elements:

- What is allowed (proofreading vs drafting)

- Whether disclosure is required/encouraged

- How the program evaluates authenticity concerns

- How applicants can respond if concerns arise

B) Use structured prompts/interviews to validate authorship

If writing authenticity is a concern, use:

- brief “explain your essay” interview prompts

- scenario-based questions linked to stated experiences

- consistency checks across experiences, LOR themes, and interview narratives

C) If you suspect a true violation, report with facts, not detector output alone

The 2026–27 investigations policy describes reporting suspected violations and instructs programs to include relevant facts, dates, events, and documentation; reports can be emailed to PAEA at the CASPA investigations address.

5) FAQ (for program staff)

Can our program use AI detection tools?

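Yes. The CASPA applicant agreement states that PAEA and PA programs reserve the right to use systems that detect AI-created or AI-modified content.

However, PAEA urges caution because of high false-positive rates and will not open an investigation based solely on a detector flag.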

What is CASPA’s direction for 2026–27?

Applicants may use AI tools for non-substantive edits like spelling/grammar, as long as the submission remains their own and institutional policies are followed.

Ready to discuss your admissions workflow strategy?

We’re helping PA programs modernize their admissions operations with AI-assisted tools built for speed, fairness, and transparency. Book a conversation →

— Meshwell Staff